Why Prompt-Driven Development Is a Liability

Speed is cheap. Stability is expensive.

How the C.O.D.E.S. Framework prevents AI technical debt.

AI has democratized code generation. Unfortunately, it has also democratized bad architecture.

Over the last year, the industry has rushed to adopt what we call Prompt-Driven Development. The promise is seductive: write a sentence, get a feature. For demos, this speed feels miraculous. For production systems, it often becomes a ticking time bomb.

We have spoken extensively about how we use AI tools to accelerate delivery. But we haven’t talked enough about what happens when they are used without rules.

The industry is now facing a new kind of technical debt: AI Sprawl.

At CodeSmiths, we believe that if AI can write code, the human’s job isn’t just to prompt it; it is to govern it. That is why prompt engineering alone is not enough, and why we address the problem through Governed AI Engineering.

Source: A 2025 METR study found that while developers feel faster with AI tools, they can actually be 19% slower.

What Is AI Sprawl?

AI Sprawl is the silent killer of modern software projects. It occurs when the volume of AI-generated code outpaces a team’s ability to understand, maintain, and evolve it.

In traditional development, writing code was hard. Developers naturally tried to write less of it and reuse what already existed.

In AI-driven development, writing code is effectively free. This reverses the incentive structure: it becomes easier to generate new code than to reuse existing code.

Data from GitClear shows this trend is already leading to a massive surge in code churn and a decline in code quality across the industry.

The Anatomy of Sprawl: Why It Happens

AI Sprawl is not malicious; it is structural.

It happens for three specific reasons:

1. Context Amnesia (The “Groundhog Day” Effect)

Unless supported by an advanced framework, an AI model often lacks full awareness of your existing codebase. It does not know that you wrote a formatDate() utility three months ago. So when you request a date feature today, it creates a new formatting function.

Result:

You end up with 15 different ways to format dates in a single application. Changing the format later requires hunting down and fixing it in 15 different places.
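As a minimal sketch of the alternative, the format lives in one shared utility and every feature calls it. The ISO-style output and the two call sites (`invoiceLabel`, `auditLine`) are illustrative assumptions, not code from a real project.

```typescript
// Single source of truth for date formatting (illustrative format choice).
function formatDate(date: Date): string {
  // ISO 8601 calendar date in UTC: the first 10 characters of toISOString().
  return date.toISOString().slice(0, 10);
}

// Hypothetical call sites: both reuse the utility instead of re-implementing it.
function invoiceLabel(issued: Date): string {
  return `Invoice issued ${formatDate(issued)}`;
}

function auditLine(at: Date, action: string): string {
  return `${formatDate(at)} ${action}`;
}

const sample = new Date(Date.UTC(2025, 2, 9)); // 9 March 2025 (months are 0-based)
console.log(invoiceLabel(sample));
console.log(auditLine(sample, "user.login"));
```

Changing the format now means editing one function rather than hunting down every copy.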

2. The Dependency Trap

AI models are trained on the entire internet, including tutorials that rely on heavy libraries for trivial tasks. When asked to “sort a list,” an AI may import a 2MB library to perform something that native JavaScript can handle in a single line.

Result:

Application size explodes. We routinely see AI-generated apps that are 5× larger than necessary, leading to slower load times and higher hosting costs.
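For comparison, here is a small sketch of sorting with nothing but the language's built-in `Array.prototype.sort`. The sample data and the `Customer` type are made up for illustration.

```typescript
// Illustrative data; no third-party library is imported.
const prices: number[] = [42, 7, 19, 3];

// Copy first so the original array is not mutated, then sort numerically.
// A comparator is required: the default sort compares values as strings.
const ascending = [...prices].sort((a, b) => a - b);

interface Customer {
  name: string;
  orders: number;
}

const customers: Customer[] = [
  { name: "Meera", orders: 2 },
  { name: "Arjun", orders: 9 },
  { name: "Kiran", orders: 5 },
];

// Sorting objects by a field is still a single line.
const byOrdersDesc = [...customers].sort((a, b) => b.orders - a.orders);

console.log(ascending);
console.log(byOrdersDesc.map((c) => c.name));
```

The one trap worth reviewing in generated code is a numeric sort without a comparator, which orders numbers as text.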

3. Cognitive Debt

Code is read far more often than it is written. When AI generates verbose or overly complex solutions for simple problems, it creates cognitive debt.

Future developers, or even your own team six months later, struggle to understand why the code exists in its current form.

Result:

Onboarding becomes painful. Teams are forced to reverse-engineer thousands of lines of machine-generated logic instead of understanding the system.

AI Sprawl doesn’t break your application on Day 1. It breaks your business on Day 100.

Gartner® predicts that unmanaged AI coding will lead to a 2500% increase in software defects by 2028.

Agile startups slowly turn into sluggish enterprises, where every new feature takes weeks because the foundation has become unmanageable.

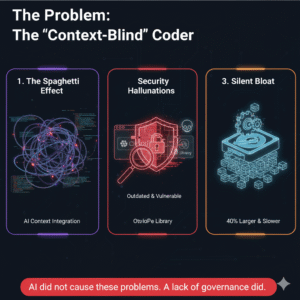

The Problem: The “Context-Blind” Coder

When developers rely solely on prompting a Large Language Model without a surrounding framework, they introduce invisible risks.

An AI model is powerful, but it is amnesiac. It solves the immediate problem in the prompt, often ignoring the rest of the system.

Six months after launch, Prompt-Driven Development usually results in:

- The Spaghetti Effect: Multiple, inconsistent ways of connecting to databases created by different prompts.

- Security Hallucinations: Libraries that appear perfect but are outdated or contain known vulnerabilities.

- Silent Bloat: Features built in isolation that ignore existing utilities, leading to codebases that are 40% larger and slower than necessary.

AI did not cause these problems.

A lack of governance did.

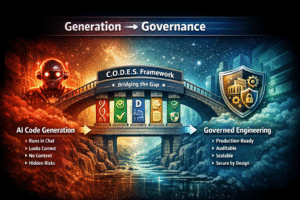

Moving from “Generation” to “Governance”

We don’t just vibe code.

We engineer.

There is a critical difference between code that runs and code that scales. To bridge that gap, CodeSmiths uses the C.O.D.E.S. Framework.

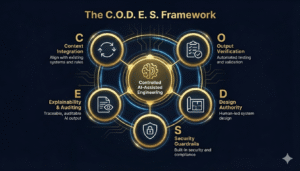

C — Context Integration (The Anti-Silo)

The Risk:

AI writes code in isolation, unaware of legacy systems or business rules.

The CodeSmiths Fix:

We inject your system’s DNA (architecture patterns, technology constraints, and business logic) into the AI’s context.

The AI doesn’t just write code;

it writes your code.
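One way to picture context integration is a helper that prepends project rules to every task before it reaches a model. This is a rough sketch under stated assumptions: `ProjectContext`, `buildPrompt`, the rules, and the `src/utils/date.ts` path are all hypothetical, and no specific AI vendor API is called.

```typescript
// Hypothetical shape for the project knowledge we want the model to see.
interface ProjectContext {
  architecture: string[];
  constraints: string[];
  existingUtilities: string[];
}

// Illustrative values only; a real project would load these from its own docs.
const context: ProjectContext = {
  architecture: ["All database access goes through the repository layer"],
  constraints: ["TypeScript strict mode", "No new runtime dependencies without review"],
  existingUtilities: ["formatDate(date: Date): string in src/utils/date.ts"],
};

// Builds a plain-text prompt: context sections first, the task last.
function buildPrompt(task: string, ctx: ProjectContext): string {
  const section = (title: string, items: string[]): string =>
    `${title}:\n${items.map((item) => `- ${item}`).join("\n")}`;

  return [
    section("Architecture rules", ctx.architecture),
    section("Constraints", ctx.constraints),
    section("Existing utilities to reuse", ctx.existingUtilities),
    `Task: ${task}`,
  ].join("\n\n");
}

const prompt = buildPrompt("Add a 'last updated' date to the invoice view", context);
console.log(prompt);
```

Because the existing `formatDate()` utility is named in the prompt, the model has a reason to reuse it instead of inventing another formatter.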

O — Output Verification (The Reality Check)

The Risk:

AI-generated code looks correct but fails under load or in edge cases.

The CodeSmiths Fix:

AI output is treated as a draft, not a deliverable. Code that passes in chat but fails in the IDE is rejected. We test beyond the happy path.
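To make "beyond the happy path" concrete, here is a small sketch that treats a drafted function as unverified until it passes edge-case checks. The `average` function and the list of checks are invented for illustration; a real project would use its own test runner.

```typescript
// Stand-in for a function drafted by an AI assistant (hypothetical example).
function average(values: number[]): number {
  if (values.length === 0) {
    // Dividing by zero would silently return NaN, so reject empty input.
    throw new RangeError("average of an empty list is undefined");
  }
  return values.reduce((sum, v) => sum + v, 0) / values.length;
}

interface Check {
  name: string;
  run: () => boolean;
}

// The first check is the happy path; the rest probe the edges.
const checks: Check[] = [
  { name: "typical input", run: () => average([2, 4, 6]) === 4 },
  { name: "single value", run: () => average([5]) === 5 },
  { name: "negative values", run: () => average([-2, 2]) === 0 },
  {
    name: "empty input is rejected",
    run: () => {
      try {
        average([]);
        return false; // no error means the edge case was missed
      } catch (err) {
        return err instanceof RangeError;
      }
    },
  },
];

// The draft is accepted only when this list is empty.
const failures = checks.filter((c) => !c.run()).map((c) => c.name);
console.log(failures.length === 0 ? "draft accepted" : `draft rejected: ${failures.join(", ")}`);
```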

D — Design Authority (The Human Shield)

The Risk:

Allowing AI to decide architecture creates systems that cannot grow.

The CodeSmiths Fix:

Architectural blueprints are human-led. AI acts as the contractor; we remain the architects. Database schemas, APIs, and security models are defined before the first prompt is written.
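A simple way to express "blueprint first" in code is a human-authored interface that any generated implementation must satisfy before it compiles. The names below (`User`, `UserRepository`, `InMemoryUserRepository`) are hypothetical, and the in-memory class stands in for whatever the AI is asked to produce.

```typescript
// Human-authored contract, written before any prompt (hypothetical names).
interface User {
  id: string;
  email: string;
}

interface UserRepository {
  findById(id: string): User | undefined;
  save(user: User): void;
}

// Stand-in for a generated implementation. The compiler rejects it
// if it drifts from the contract above.
class InMemoryUserRepository implements UserRepository {
  private readonly users = new Map<string, User>();

  findById(id: string): User | undefined {
    return this.users.get(id);
  }

  save(user: User): void {
    // Store a copy so callers cannot mutate repository state by reference.
    this.users.set(user.id, { ...user });
  }
}

const repo: UserRepository = new InMemoryUserRepository();
repo.save({ id: "u1", email: "dev@example.com" });
console.log(repo.findById("u1"));
```

The rest of the system depends only on `UserRepository`, so a generated class can be replaced without touching its callers.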

E — Explainability & Auditing (The Paper Trail)

The Risk:

Black-box code that no one understands or can safely modify later.

The CodeSmiths Fix:

We document not just the “what,” but the “why.” Future teams should never have to reverse-engineer AI logic to make simple changes.

S — Security Guardrails (The Watchdog)

The Risk:

Supply-chain attacks via hallucinated packages or weak cryptography.

The CodeSmiths Fix:

Security is a constraint, not an afterthought. AI is restricted to approved libraries, and generated code is immediately scanned for vulnerabilities.
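The "approved libraries" rule can be sketched as a check that compares a project's declared dependencies against an allowlist. The allowlist contents and the `findUnapproved` helper are assumptions for illustration; this does not replace a real vulnerability scanner.

```typescript
// Illustrative allowlist; a real team would maintain its own.
const APPROVED_PACKAGES: ReadonlySet<string> = new Set(["react", "zod", "date-fns"]);

// Returns dependency names that are not on the allowlist, sorted for stable output.
function findUnapproved(
  dependencies: Record<string, string>,
  approved: ReadonlySet<string>,
): string[] {
  return Object.keys(dependencies)
    .filter((name) => !approved.has(name))
    .sort();
}

// Shaped like the "dependencies" field of a package.json (made-up example).
const declared: Record<string, string> = {
  react: "^18.0.0",
  "date-fns": "^3.0.0",
  "made-up-date-helper": "^1.0.0", // the kind of name a model might hallucinate
};

const unapproved = findUnapproved(declared, APPROVED_PACKAGES);
if (unapproved.length > 0) {
  console.log(`Blocked: unapproved packages ${unapproved.join(", ")}`);
}
```

Run as part of CI, a check like this stops an unknown package from being installed just because generated code imported it.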

Research shows that AI-generated code contains security flaws or vulnerabilities 45% of the time.

AI Changes Execution — Not Accountability

This is the core of our philosophy.

AI excels at execution:

- Writing boilerplate

- Refactoring code

- Exploring syntax and implementation options

AI does not:

- Understand business trade-offs

- Own long-term system health

- Take responsibility for data breaches

- Decide what should not be built

That responsibility remains human.

Speed Without Chaos

Clients don’t hire CodeSmiths simply because we use AI. Anyone can purchase a Copilot subscription.

They hire us because we use AI responsibly.

We are entering a world where code is cheap.

Trust, however, is becoming the most expensive resource in software engineering.

The future belongs to teams that build systems designed to survive, not just scripts that happen to run.

Are you ready to build with speed, innovation, and total control?
